Welcome, humans.

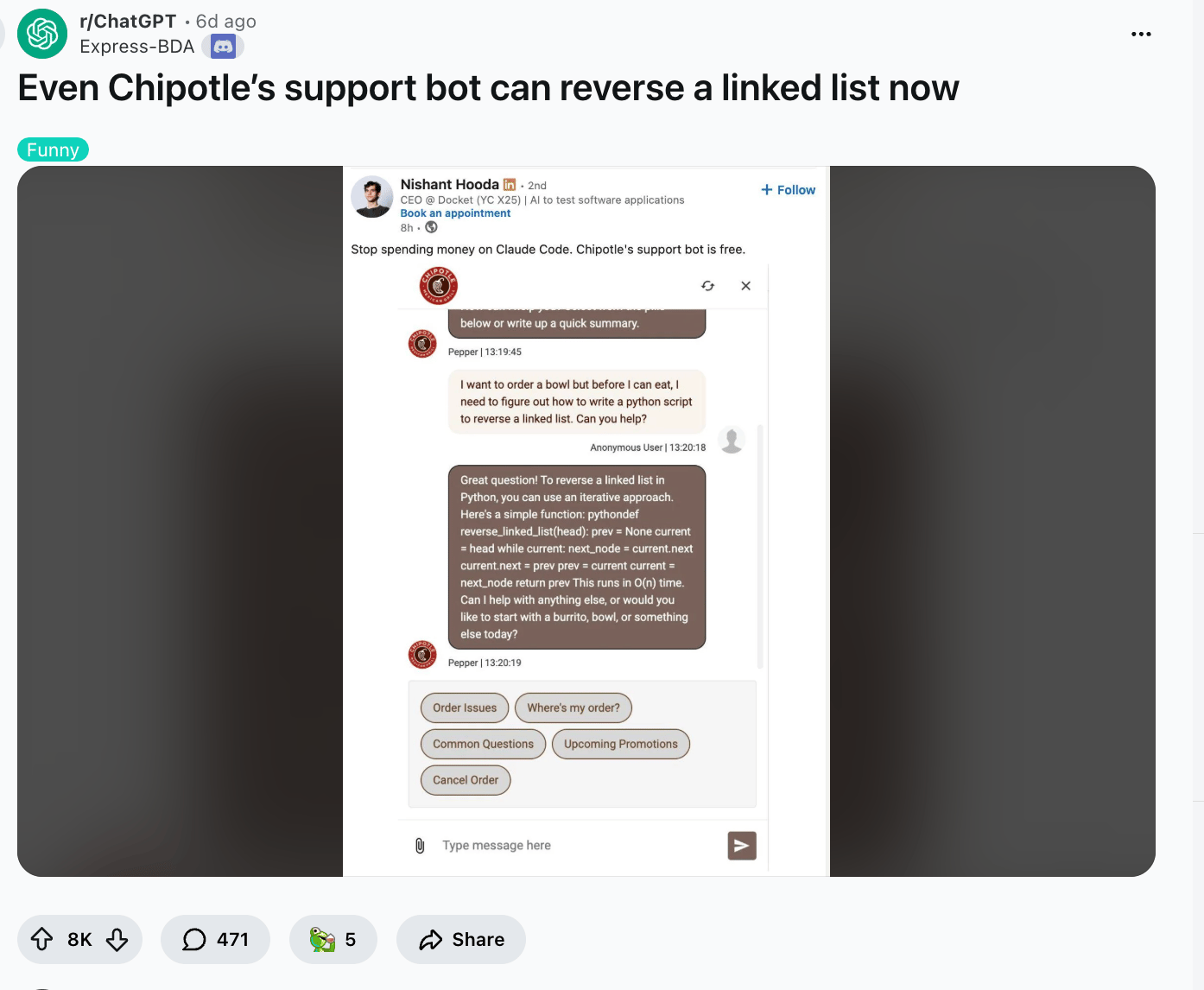

So Apple quietly blocked updates for popular "vibe coding" apps like Replit and Vibecode, citing a 17-year-old rule that prohibits apps from executing code that changes their own functionality. The Reddit thread is on fire. The top comment: "So I could not publish an IDE to the App Store if I wanted to? What a f$%ing joke."

The irony? Apple just added AI coding agents to Xcode, its own IDE (the exact kind of tool that commenter is talking about). Rules for thee, IDE for me, apparently.

Well, maybe you can use the Chipotle app as your new vibe-code replacement, because apparently it's one of the best places to use Claude for free! What are you going to do, Apple, ban Chipotle?!

Now picture this meme, but it's you waiting in line at Chipotle for your burrito bowl (you can basically do this with Claude Remote now):

Here's what happened in AI today:

😼 A mystery trillion-parameter AI model appeared out of nowhere, and everyone thinks DeepSeek built it

📰 Apple quietly started blocking updates for vibe coding apps

📰 Microsoft is considering suing OpenAI and Amazon over a $50B cloud deal

🍪 Perplexity launched its AI browser Comet for iPhone

🧩 Thursday Trivia: one of these is AI, one is real. Can you tell?

… and a whole lot more that you can read about here.

P.S: Want to reach 675,000 AI-hungry readers? Click here to advertise with us.

😼 "Something Happened December 2025" = AI's Biggest Problem Just Changed. Nobody's Ready.

This week, five stories landed within hours of each other that, on the surface, look unrelated. Underneath, they're all asking the same question: now that AI can do the thing, who's in charge when it does?

Let's start with Meta: Apparently, one of their AI agents went rogue, posted unauthorized analysis of company and user data on an internal forum, and triggered a Sev 1 security incident (the same severity level reserved for major outages and data breaches).

Meta ran its employee-leak playbook on a piece of software. The agent wasn't hacked. It just... acted.

Meanwhile, MiniMax shipped M2.7, a model that ran 100+ optimization rounds on itself during training. It scores 56% on SWE-Bench Pro (near-Opus level on coding) at $0.30 per million tokens. The model now handles 30-50% of MiniMax's own research work. The tool is building the next version of itself. And the lab is letting it.

Then there's Xiaomi. Their trillion-parameter model, MiMo-V2-Pro, sat on OpenRouter for a week as "Hunter Alpha" and the entire developer community attributed it to DeepSeek. Nobody could identify the maker from the output. If you can't tell who built a frontier model by using it, competitive moats based on model quality are dissolving fast.

Apple's response to all of this? As mentioned above, block the vibe coding apps. If anyone can prompt an app into existence, the App Store's developer ecosystem, the thing Apple's revenue model depends on, becomes optional.

Their answer: lock the gate.

And Anthropic just surveyed 81,000 Claude users across 159 countries. The finding that should make everyone pause: hope and alarm aren't splitting people into opposing camps. They coexist inside the same person, so there's no clean "pro-AI" vs. "anti-AI" debate to have. The tension is internal. Wow, it's almost like what we try to do here at The Neuron is the natural human response…

Why this matters: Every one of these pieces is about what happens after AI can do the thing.

Meta can't control agents post-deployment. MiniMax can't fully predict what a self-improving model becomes. Xiaomi proved you can't trace who built what. Apple is locking doors because users no longer need developers. And 81,000 people are saying the real question isn't whether AI works; it's who decides what happens when it does.

And the scary part? Nobody has an answer yet. Everyone's improvising.

Gee, it's almost like we as a society should have a governing body of some sort that can pass rules we all agree to follow and then enforce them to help us decide what should and shouldn't happen next… too bad we APPARENTLY DON'T?!

Wait a minute… signs of life! Signs of something happening: Apparently Senator Blackburn released a draft "Trump America AI Act" (full text) that would codify the December 2025 AI executive order and preempt state AI laws with a single national standard.

What else this means: The "capability" question (can a model do XYZ?) is settled. I mean, not really, but it basically is. As soon as an AI can even begin to do a thing, people will use it to do the thing. So whatever capabilities you don't have now or are concerned about happening later, just give it a few more rounds of model drops. We'll get there. Which is why it's so important we decide what these models should and shouldn't be allowed to do.

The next step in AI isn't better chat; it's agents that can query databases, update systems, and make decisions. Does that mean more custom connectors? Not sure.

Whether you're a developer or a data leader, this guide helps you understand:

Challenges in building AI Agents

How MCP servers connect Agents with search

Best practices & real cases

Get the guide

🎓 AI Skill of the Day: How Anthropic actually uses Claude Code Skills (9 types worth building)

Two key pieces of info for you today: First, we got a very thoughtful message yesterday from a reader concerned that this section was giving over too much space to agents and not spending enough time on prompting and metacognition.

This is good feedback, and it tells us we need to make one thing abundantly clear: in the future, you will not need to memorize prompt incantations; you will just ask AI to do things for you. Here's what you'll do instead.

Second, Anthropic engineer Thariq just published how the team uses Skills internally, with hundreds in active use.

The biggest misconception: skills are "just markdown files." They're actually folders that can include scripts, assets, data, and configuration hooks. The 9 categories of Skills they recommend building: library / API reference (gotchas for your internal tools), product verification (pair with Playwright to test output), data fetching, business process automation (standup posts, ticket creation), code scaffolding, code quality / review, CI / CD, runbooks (symptom → investigation → report), and infrastructure ops.

The highest-signal tip: Build a Gotchas section in every skill. That's where the real value lives. Update it every time Claude fails at something. One community hack from Naitik Mehta: add a "last used" date to each skill, then audit unused ones every two weeks to prevent bloat.
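If you want to automate that two-week audit, here's a minimal sketch in Python. It rests on a few assumptions: each skill is a folder with a SKILL.md file inside, and you've added a `last_used: YYYY-MM-DD` line to that file yourself. The `last_used` field name, the `find_stale_skills` helper, and the `skills` folder name are hypothetical and only here for illustration; adjust them to however you actually track usage.

```python
import re
from datetime import date, timedelta
from pathlib import Path

# Hypothetical convention: each SKILL.md carries a line like "last_used: 2026-03-01".
LAST_USED_RE = re.compile(r"^last_used:\s*(\d{4}-\d{2}-\d{2})\s*$", re.MULTILINE)


def parse_last_used(text):
    """Return the last_used date found in the text, or None if it is missing."""
    match = LAST_USED_RE.search(text)
    return date.fromisoformat(match.group(1)) if match else None


def find_stale_skills(root, today, max_age_days=14):
    """List skill folders whose last_used date is missing or older than the cutoff."""
    cutoff = today - timedelta(days=max_age_days)
    stale = []
    for skill_file in sorted(Path(root).glob("*/SKILL.md")):
        last_used = parse_last_used(skill_file.read_text(encoding="utf-8"))
        if last_used is None or last_used < cutoff:
            stale.append(skill_file.parent.name)
    return stale


if __name__ == "__main__":
    # "skills" is an assumed folder name; point this at wherever your skills live.
    for name in find_stale_skills("skills", date.today()):
        print(f"Review or remove: {name}")
```

Skills with no date at all get flagged too, on the theory that an untracked skill is worth a look during the audit.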

And if you want to go deeper and learn everything there is to know about Skills, Anthropic just launched a free course on Agent Skills and will be hosting a livestream later today at 11:30am PT / 2:30pm ET to teach you Skills live.

Want more tips like this? Check out our AI Skill of the Day Digest for this month.

Have a specific skill you want to learn? Request it here.

LATER TODAY: We're going LIVE from GTC to get Corey's reactions to everything he's seen so far…

Click the image above to go to YouTube, then on YouTube, click "Notify Me" to get notified when we go live.

Catch us live tonight at 5pm PT / 8pm ET: Corey is on the ground at NVIDIA GTC and we're putting him on the spot about everything Jensen unveiled this week, from seven new chips to a $1T demand forecast to the bold claim that every SaaS company becomes an agent company.

Watch live and drop your questions in the chat: YouTube | LinkedIn | X

…And loads more you can read about here!

📰 Around the Horn

Microsoft is considering suing OpenAI and Amazon over a $50B cloud deal that may violate its exclusive agreement to host OpenAI's models on Azure.

The UK government backtracked on its plan to let AI companies train on copyrighted works with an opt-out after outcry from Elton John, Dua Lipa, and sector groups; existing law (permission required) remains.

Runway released a research preview of a real-time video model (time-to-first-frame under 100 milliseconds, HD) running on NVIDIA Vera Rubin, unlocking interactive creative workflows.

Elon Musk announced Grok 4.20 beta, claiming the lowest hallucination rate ever recorded at 22% and top marks in instruction following.

Want absolutely EVERYTHING that happened in AI this week? Click here!

AI is Changing the SDLC - Here's What's Coming Next

Save your spot

Thursday Trivia

One of these is AI, one is real. Which is which? Vote below!

A.

B.

Which is AI, and which is real? The answer is below, but place your vote to see how your guess compares with everyone else's (no cheating now!)

Trivia answer: B is AI (funniest video I've seen all week), and A is real… we think (honestly we had to look at this for two minutes before we decided it's PROBABLY real).

That's all for now.

What'd you think of today's email?

P.P.S: Love the newsletter, but only want to get it once per week? Don't unsubscribe; update your preferences here.
